Implementing Continuous Monitoring and Optimization Workflows


What you'll learn

This module explains how to monitor performance continuously and turn the resulting data into a repeatable optimization workflow. By systematically observing key metrics and following a structured approach to optimization, organizations can identify bottlenecks early, address issues before they escalate, and keep refining their operations toward higher standards of quality and output.

What is Performance Monitoring?

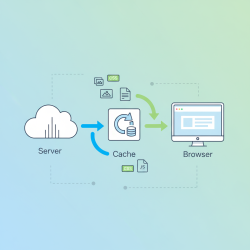

Performance monitoring involves the systematic collection, analysis, and reporting of data related to the operational health and efficiency of various components within an IT infrastructure or business process. It’s about having a clear, real-time view into how everything is functioning. This proactive approach allows teams to detect anomalies, understand trends, and gain insights into potential areas of improvement.

Effective monitoring goes beyond simply checking if a system is up or down. It delves into the specifics of resource utilization, response times, error rates, and user engagement, providing a holistic picture of performance. The goal is to move from reactive problem-solving to proactive identification and prevention, minimizing downtime and maximizing productivity.

Key Metrics to Monitor

Choosing the right metrics is crucial for effective monitoring. These metrics should align with business goals and provide actionable insights. A broad range of indicators can be tracked, depending on the specific system or application being monitored. Common categories include:

- System Resource Utilization: CPU usage, memory consumption, disk I/O, network bandwidth. Sustained high utilization often points to bottlenecks or capacity limits.

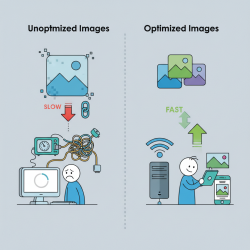

- Application Performance: Response times, transaction rates, error rates, latency, throughput. These metrics directly affect user experience and business-critical operations.

- Database Performance: Query execution times, connection pool usage, slow queries, deadlocks. Database efficiency is vital for data-driven applications.

- Network Performance: Packet loss, latency, jitter, bandwidth utilization. Network issues can significantly degrade application performance.

- User Experience Metrics: Page load times, click-through rates, session duration, conversion rates. These measure the actual impact on the end user.
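To make the application metrics above concrete, the sketch below turns a window of request records into throughput, error rate, and latency percentiles. It is a minimal illustration, assuming a hypothetical `Request` record with a completion timestamp, a latency in milliseconds, and an HTTP status code, and treating only 5xx responses as errors; real APM tools compute these figures from their own data models.

```python
import math
from dataclasses import dataclass


@dataclass
class Request:
    """One observed request (hypothetical record format for illustration)."""
    timestamp_s: float  # when the request completed, in seconds
    latency_ms: float   # end-to-end response time in milliseconds
    status: int         # HTTP status code


def percentile(values, p):
    """Nearest-rank percentile: the smallest sample with at least p% of samples at or below it."""
    if not values:
        raise ValueError("percentile of an empty sequence")
    ordered = sorted(values)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(requests, window_s):
    """Summarize one time window of requests into common application metrics."""
    if not requests:
        raise ValueError("no requests in window")
    latencies = [r.latency_ms for r in requests]
    # Assumption: only server-side failures (5xx) count as errors.
    errors = sum(1 for r in requests if r.status >= 500)
    return {
        "throughput_rps": len(requests) / window_s,
        "error_rate": errors / len(requests),
        "p50_ms": percentile(latencies, 50),
        "p95_ms": percentile(latencies, 95),
        "p99_ms": percentile(latencies, 99),
    }
```

Percentiles are used instead of an average because a small number of very slow requests can hide behind a healthy-looking mean while still hurting the users who hit them.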

Regularly reviewing these metrics against established baselines helps in identifying deviations and understanding performance trends over time.
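One simple way to compare new samples against a baseline is a z-score: how many standard deviations a value sits from the baseline mean. The sketch below assumes the baseline is a list of samples taken during normal operation and uses a default threshold of 3 standard deviations; both the function name `find_deviations` and that threshold are illustrative choices, not a standard.

```python
import math
import statistics


def find_deviations(baseline, recent, z_threshold=3.0):
    """Return (index, value, z) for each recent sample far from the baseline.

    baseline: historical samples assumed to represent normal behaviour
              (statistics.stdev needs at least two of them).
    recent:   new samples to check against that baseline.
    """
    mean = statistics.mean(baseline)
    stdev = statistics.stdev(baseline)
    flagged = []
    for i, value in enumerate(recent):
        if stdev == 0:
            # Perfectly flat baseline: any change at all counts as a deviation.
            z = 0.0 if value == mean else math.inf
        else:
            z = (value - mean) / stdev
        if abs(z) > z_threshold:
            flagged.append((i, value, z))
    return flagged


# Example: response times (ms) from a normal period, then three new readings.
baseline_ms = [200, 210, 190, 205, 195]
print(find_deviations(baseline_ms, [202, 198, 260]))
```

Here only the 260 ms reading is flagged. A z-score assumes the baseline is roughly stable; metrics with strong daily or weekly patterns usually need a baseline taken from the same time of day or week.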

Tools and Technologies for Monitoring

A wide range of tools supports performance monitoring across the different layers of the IT stack, from open-source solutions to comprehensive enterprise platforms. Many modern tools also apply statistical or machine-learning models to flag anomalies automatically and to forecast potential performance issues.

Key features to look for in monitoring tools include real-time dashboards, customizable alerts, historical data retention, integration capabilities with other systems, and distributed tracing. The choice of tools often depends on the scale, complexity, and specific requirements of the environment being monitored. Popular categories include Application Performance Monitoring (APM) tools, infrastructure monitoring tools, network monitoring tools, and log management systems.
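Customizable alerts usually combine a threshold with a condition on how long it must be breached, so that a single spike does not page anyone. The class below is a hypothetical, tool-agnostic sketch of that idea: it fires only after the metric has exceeded its threshold for a configurable number of consecutive evaluations and resets as soon as a sample falls back below it. Actual monitoring products express this in their own rule syntax.

```python
from dataclasses import dataclass, field


@dataclass
class ThresholdAlert:
    """Hypothetical alert rule: fire when a metric stays above `threshold`
    for `for_evaluations` consecutive checks."""
    name: str
    threshold: float
    for_evaluations: int = 3
    _breaches: int = field(default=0, init=False)  # consecutive breaching samples

    def evaluate(self, value: float) -> bool:
        """Feed one new sample; return True while the alert is firing."""
        if value > self.threshold:
            self._breaches += 1
        else:
            self._breaches = 0  # condition cleared, so restart the count
        return self._breaches >= self.for_evaluations


# Example: CPU usage (%) sampled once per evaluation interval.
cpu_alert = ThresholdAlert("high_cpu", threshold=90.0, for_evaluations=3)
for sample in [95, 97, 80, 92, 93, 96, 99]:
    print(sample, cpu_alert.evaluate(sample))
```

The dip to 80 resets the count, so the alert only starts firing at the third consecutive reading above 90 (the 96). Tuning `for_evaluations` trades faster detection against fewer false alarms.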

Establishing a Continuous Improvement Workflow

Monitoring data is only valuable if it leads to action. A well-defined workflow for continuous optimization ensures that insights gained from monitoring are translated into tangible improvements. This workflow should be iterative and ingrained in the operational culture.

- Identify: Use monitoring data to pinpoint specific areas of underperformance or potential issues. This might involve setting up threshold-based alerts or regularly reviewing dashboards.

- Analyze: Investigate the root cause of identified problems. This often requires deep dives into logs, tracing requests, and correlating data from various sources.

- Plan: Develop a strategy to address the root cause, outlining specific actions, responsible parties, and expected outcomes. Prioritize improvements based on impact and feasibility.

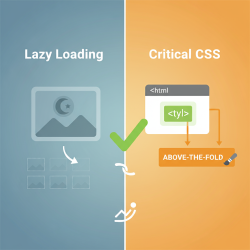

- Implement: Execute the planned changes. This could involve code optimization, infrastructure upgrades, configuration adjustments, or process refinements.

- Monitor & Verify: After implementing changes, continue to monitor the relevant metrics closely to ensure the improvements have had the desired effect and haven't introduced new issues.

- Review & Refine: Regularly review the overall process, collect feedback, and identify opportunities to make the monitoring and improvement workflow itself more efficient.

This cyclical approach ensures that optimization is an ongoing process, not a one-off project.
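The Monitor & Verify step can be partly automated by comparing metrics collected before and after a change. The sketch below is one possible check, assuming you have latency samples and an error rate for each period: it treats the change as an improvement only if p95 latency went down and the error rate did not rise by more than a small, illustrative margin. The function names and the 0.001 default margin are hypothetical.

```python
import math


def p95(values):
    """Nearest-rank 95th percentile of a non-empty list of samples."""
    ordered = sorted(values)
    return ordered[max(1, math.ceil(95 * len(ordered) / 100)) - 1]


def verify_change(before_latency_ms, after_latency_ms,
                  before_error_rate, after_error_rate,
                  max_error_rate_increase=0.001):
    """Return (improved, details) for a before/after comparison.

    improved is True only if p95 latency decreased AND the error rate
    did not increase by more than max_error_rate_increase.
    """
    before_p95 = p95(before_latency_ms)
    after_p95 = p95(after_latency_ms)
    latency_better = after_p95 < before_p95
    no_new_errors = (after_error_rate - before_error_rate) <= max_error_rate_increase
    details = {
        "before_p95_ms": before_p95,
        "after_p95_ms": after_p95,
        "error_rate_delta": after_error_rate - before_error_rate,
    }
    return latency_better and no_new_errors, details
```

This is a deliberately simple comparison: it does not account for noise or for differences in traffic between the two periods, so for small or noisy samples a longer observation window or a statistical test is a safer basis for declaring success.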

The Role of Data Analysis

Beyond simply collecting data, robust data analysis is critical. It involves transforming raw monitoring data into meaningful insights that can drive informed decisions. Trends, patterns, and correlations can reveal underlying system behaviors that might not be immediately obvious. Predictive analytics, for instance, can help anticipate future performance degradation based on historical data.
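The simplest form of predictive analysis is fitting a trend line to historical data and extrapolating it. The sketch below fits an ordinary least-squares line to a hypothetical disk-usage series and estimates when it will reach a capacity limit. It assumes growth is roughly linear; production forecasting tools typically use richer models that handle seasonality and sudden changes.

```python
def forecast_crossing(times, values, limit):
    """Fit value = slope * t + intercept by least squares and return the time
    at which the fitted line reaches `limit`.

    Returns None if the trend is flat or falling. If the limit is already
    exceeded, the returned time may lie in the past.
    """
    n = len(times)
    if n < 2 or n != len(values):
        raise ValueError("need at least two (time, value) pairs")
    mean_t = sum(times) / n
    mean_v = sum(values) / n
    sxx = sum((t - mean_t) ** 2 for t in times)
    if sxx == 0:
        raise ValueError("all timestamps are identical")
    sxy = sum((t - mean_t) * (v - mean_v) for t, v in zip(times, values))
    slope = sxy / sxx
    intercept = mean_v - slope * mean_t
    if slope <= 0:
        return None
    return (limit - intercept) / slope


# Example: disk usage (%) recorded once a day for five days.
days = [0, 1, 2, 3, 4]
disk_used_pct = [50, 52, 54, 56, 58]
print(forecast_crossing(days, disk_used_pct, limit=90))
```

With usage growing by 2 percentage points per day from 50%, the fitted line reaches 90% on day 20, which gives the team a concrete deadline for adding capacity.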

Visualizations, such as dashboards and graphs, play a crucial role in making complex data understandable and accessible to various stakeholders. Regular reporting on key performance indicators (KPIs) helps maintain visibility and accountability across teams.

Culture of Optimization

Ultimately, successful continuous improvement hinges on fostering a culture where optimization is a shared responsibility. This means encouraging open communication, promoting a learning mindset, and empowering teams to experiment and innovate. Performance goals should be clearly communicated and integrated into team objectives.

Regular feedback loops, both within teams and with end-users, are vital for understanding the real-world impact of performance changes. Celebrating successes and learning from failures reinforces the value of continuous effort.

Summary

Establishing effective performance monitoring and a robust continuous improvement workflow is fundamental for any organization seeking operational excellence. It begins with understanding what performance monitoring entails, identifying and tracking key metrics, and choosing appropriate tools. The collected data then feeds a systematic workflow of identification, analysis, planning, implementation, verification, and refinement. Supported by strong data analysis and a pervasive culture of optimization, this iterative process keeps systems and processes evolving to meet and exceed performance expectations, leading to greater reliability, efficiency, and user satisfaction.