Server-Side Caching Techniques



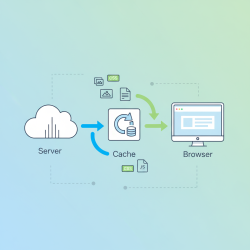

Users expect fast load times and a seamless experience, while businesses demand efficient use of server resources. Meeting both goals usually requires deliberate optimization, and one of the most effective techniques is server-side caching. Caching stores copies of frequently accessed data or computationally expensive results in a temporary, high-speed storage location, so that later requests for the same data can be served without regenerating it from scratch. This article covers the main server-side caching techniques (database, object, and full-page caching), shows where each one fits in the request path, and explains how applying them well improves the efficiency and responsiveness of modern web applications.

The Rationale Behind Server-Side Caching

Dynamic web applications, by their very nature, involve complex processes for each user request. This typically includes fetching data from databases, performing business logic computations, rendering templates, and assembling the final HTML response. Each of these steps consumes server resources, including CPU cycles, memory, and I/O operations. When traffic surges, these processes can become bottlenecks, leading to slow response times, increased server load, and potentially service degradation or outages.

Server-side caching mitigates these issues by intercepting requests and serving pre-computed or pre-fetched content whenever possible. Instead of repeatedly executing the same database queries or rendering the same page components, the server can retrieve the cached version, drastically reducing the workload. This not only speeds up the user experience but also allows the application to handle a significantly higher volume of traffic with the same infrastructure, improving overall scalability and cost-effectiveness.

Database Caching

Database caching is a fundamental server-side caching technique that focuses on reducing the load on your database server and accelerating data retrieval. Databases are often the slowest component in a web application stack due to disk I/O, complex query execution, and network latency. By caching query results or frequently accessed data, applications can avoid hitting the database for every request.

Common approaches to database caching include:

- Query Result Caching: Storing the results of specific SQL queries, keyed by the query text together with its parameters. When the same query runs again with the same parameters, the cached result is returned without touching the database. This is particularly effective for read-heavy operations on data that doesn't change frequently.

- ORM-Level Caching: Many Object-Relational Mapping (ORM) frameworks offer built-in caching mechanisms. These can cache objects retrieved from the database or even the raw query results, preventing redundant database calls when working with application models.

- Dedicated Caching Layers: Using external, high-performance key-value stores like Redis or Memcached to store database entities or query results. The application logic first checks the cache; if the data is present (a "cache hit"), it uses the cached version. Otherwise (a "cache miss"), it fetches the data from the database, stores it in the cache, and then returns it. This pattern is commonly called cache-aside.

The primary benefit of database caching is a substantial reduction in database queries and improved response times for data-intensive operations. However, managing cache invalidation—ensuring cached data reflects the latest changes in the database—is a critical challenge that must be carefully addressed to prevent serving stale information.

Object Caching

Object caching, sometimes referred to as application-level caching, involves storing specific application objects, data structures, or the results of complex computations rather than just raw database query results or full page output. This technique targets the application logic layer, saving CPU cycles that would otherwise be spent re-generating these objects repeatedly.

For instance, if your application runs a complex algorithm to calculate a user's personalized recommendations or to process a user profile, the output of that computation can be cached. Subsequent requests for the same user's recommendations can then retrieve the pre-computed object directly from the cache, bypassing the heavy processing required to generate it. This pays off most for operations that are computationally expensive but deterministic, that is, operations that always produce the same result for the same inputs.
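
For computations that live in a single process, Python's standard `functools.lru_cache` decorator is one simple way to get this kind of object caching. In this sketch, `compute_recommendations` and its formula are hypothetical placeholders for a real, expensive algorithm; the approach only works because the result depends solely on the function's (hashable) argument.

```python
from functools import lru_cache

computations = 0  # tracks how many times the expensive path actually runs


@lru_cache(maxsize=1024)  # in-process cache, bounded to 1024 entries with LRU eviction
def compute_recommendations(user_id: int) -> tuple[str, ...]:
    """Hypothetical stand-in for an expensive, deterministic recommendation algorithm."""
    global computations
    computations += 1
    # A tuple is returned so the shared cached result cannot be mutated by callers.
    return tuple(f"item-{(user_id * k) % 97}" for k in range(1, 4))


recs_a = compute_recommendations(42)
recs_b = compute_recommendations(42)  # served from the cache; the body does not run again
print(recs_a is recs_b, computations)  # True 1
```

Because `lru_cache` lives in the process's own memory, each application instance keeps a separate copy, which is the in-memory limitation discussed next.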

Object caches can be implemented in various ways:

- In-memory Caching: Storing objects directly in the application's memory. This is the fastest form of caching but is limited by the server's RAM and is not shared across multiple application instances.

- Distributed Object Caches: Utilizing systems like Redis or Memcached, similar to database caching, but for storing application-specific objects. These systems allow multiple application servers to share a common cache, making them suitable for horizontally scaled applications.

The main advantage of object caching is accelerating the application's business logic, reducing processing time, and freeing up CPU resources. Careful consideration of object serialization (how objects are converted for storage and retrieval) and robust invalidation strategies are essential to maintain data integrity and performance.
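
Serialization is what makes a shared cache usable across instances: stores such as Redis hold bytes or strings, not live Python objects. The following sketch assumes JSON as the storage format and uses a dictionary of bytes as a stand-in for the distributed store; `UserProfile`, `shared_cache`, `cache_profile`, and `load_profile` are hypothetical names.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class UserProfile:  # hypothetical application object
    user_id: int
    display_name: str
    interests: list[str]


# Stand-in for a distributed cache, which stores bytes rather than Python objects.
shared_cache: dict[str, bytes] = {}


def cache_profile(profile: UserProfile) -> None:
    # Serialize to JSON bytes so any application instance can read the entry.
    key = f"profile:{profile.user_id}"
    shared_cache[key] = json.dumps(asdict(profile)).encode("utf-8")


def load_profile(user_id: int) -> Optional[UserProfile]:
    raw = shared_cache.get(f"profile:{user_id}")
    if raw is None:
        return None  # cache miss: the caller would rebuild the object and re-cache it
    return UserProfile(**json.loads(raw))  # deserialize back into the domain object


cache_profile(UserProfile(7, "Ada", ["caching", "databases"]))
print(load_profile(7))  # UserProfile(user_id=7, display_name='Ada', interests=['caching', 'databases'])
```

JSON keeps cached entries readable by services written in other languages. Python's `pickle` handles arbitrary objects more conveniently, but it ties every reader to Python and must never be used to load data from untrusted sources.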

Full-Page Caching

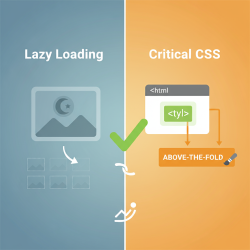

Full-page caching is perhaps the most impactful server-side caching technique for dramatically improving the response time of an entire web page. This method involves storing the complete HTML output of a dynamically generated page and serving this static version directly to subsequent requests, bypassing almost all application logic, database queries, and template rendering.

Full-page caching is typically implemented at a higher level in the request chain, often by:

- Reverse Proxies: Tools like Varnish Cache or Nginx can sit in front of your web application server. They intercept all incoming requests, check if a cached version of the requested page exists, and if so, serve it immediately. Only if the page is not cached or is stale will the request be forwarded to the application server.

- Content Delivery Networks (CDNs): While CDNs primarily cache static assets, many also offer full-page caching capabilities. They store pages at edge locations geographically closer to users, further reducing latency.

- Application-Level Full-Page Caching: Some web frameworks provide built-in or plugin-based full-page caching mechanisms that store the rendered HTML output before sending it to the client.

This technique is most effective for pages that receive heavy traffic but whose content is largely the same for every visitor, such as blog posts, product listings (for anonymous users), or informational pages. The gains are substantial because a cache hit is answered without engaging the backend application or database at all. The biggest challenge is handling authenticated users, personalized content, and rapidly changing data. Common strategies are "hole punching", where the dynamic fragments of an otherwise cached page are filled in separately (for example with Edge Side Includes, which Varnish supports), and keeping separate cache variations keyed on user role or request parameters.
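
The variation and bypass strategies can be combined in a single lookup: shared pages get one cached variation per role, while per-user pages skip the cache entirely. This is a sketch under assumptions; in a real application the `role` and `personalized` inputs would come from the request's session, and `serve`, `render`, and `variant_cache` are hypothetical names.

```python
variant_cache: dict[tuple[str, str], str] = {}  # (path, role) -> rendered HTML
renders = 0


def render(path: str, role: str) -> str:
    """Hypothetical page renderer; counts calls to show when the cache is bypassed."""
    global renders
    renders += 1
    return f"<p>{path} rendered for {role}</p>"


def serve(path: str, role: str, personalized: bool) -> str:
    if personalized:
        return render(path, role)  # bypass: per-user content must never be shared
    key = (path, role)  # one cached variation per role, e.g. "anonymous" vs "member"
    if key not in variant_cache:
        variant_cache[key] = render(path, role)
    return variant_cache[key]


serve("/products", "anonymous", personalized=False)  # miss: rendered and cached
serve("/products", "anonymous", personalized=False)  # hit
serve("/products", "member", personalized=False)     # separate variation: rendered
serve("/cart", "member", personalized=True)          # bypass: rendered
serve("/cart", "member", personalized=True)          # bypass again: rendered
print(renders)  # 4
```

At the HTTP layer the same idea is usually expressed with the `Vary` and `Cache-Control` response headers, which tell proxies and CDNs which variants they may store and which responses are private.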

Cache Invalidation Strategies

A robust caching strategy is only as good as its invalidation mechanism. Serving stale data can be detrimental to user experience and data accuracy. Effective cache invalidation ensures that when underlying data changes, the cached representation is either updated or removed, forcing a fresh generation.

Common invalidation strategies include:

- Time-to-Live (TTL): Caching items with an expiration time. After the TTL elapses, the cached item is considered stale and is regenerated on the next request. This is simple but involves a trade-off: a long TTL can serve stale data until it expires, while a short TTL regenerates data unnecessarily when the item rarely changes.

- Event-Driven Invalidation: When data changes in the source (e.g., a database record is updated), an event is triggered that explicitly invalidates the relevant cached items. This offers high accuracy but requires careful design and implementation to identify and invalidate all affected cache entries.

- Manual Invalidation: Providing administrative tools or API endpoints to manually clear specific parts of the cache or the entire cache. This is often used for emergency situations or planned content updates.
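
The three strategies can coexist in one cache. A minimal in-memory sketch, assuming a single process: entries carry an expiry time (TTL), `invalidate` is meant to be called from whatever code path updates the source data (event-driven), and `clear` is what an administrative endpoint would call (manual). The `InvalidatingCache` class and its injectable `clock` parameter are hypothetical; the clock exists only so expiry can be demonstrated without sleeping.

```python
import time
from typing import Any, Callable, Optional


class InvalidatingCache:
    """In-memory sketch combining TTL expiry, event-driven invalidation, and manual clearing."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock  # injectable time source (hypothetical, for demonstration)
        self._entries: dict[str, tuple[Any, float]] = {}  # key -> (value, expires_at)

    def get(self, key: str) -> Optional[Any]:
        entry = self._entries.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self.clock() >= expires_at:  # TTL elapsed: treat as stale and drop it
            del self._entries[key]
            return None
        return value

    def set(self, key: str, value: Any) -> None:
        self._entries[key] = (value, self.clock() + self.ttl)

    def invalidate(self, key: str) -> None:
        # Event-driven: call this from the code that updates the underlying data.
        self._entries.pop(key, None)

    def clear(self) -> None:
        # Manual: e.g. wired to an admin endpoint for emergencies or content releases.
        self._entries.clear()


now = [0.0]  # fake clock value, advanced by hand
cache = InvalidatingCache(ttl_seconds=60, clock=lambda: now[0])
cache.set("product:1", {"price": 10})
now[0] = 30.0
print(cache.get("product:1"))  # {'price': 10}
now[0] = 61.0
print(cache.get("product:1"))  # None (the TTL has elapsed)

cache.set("product:1", {"price": 12})
cache.invalidate("product:1")  # an update handler evicts it without waiting for the TTL
print(cache.get("product:1"))  # None
```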

The choice of invalidation strategy depends on the volatility of the data, the criticality of data freshness, and the complexity you are willing to introduce into your application. A combination of strategies is often employed to balance performance and data consistency effectively.

Conclusion

Server-side caching is an essential tool for building fast, scalable, and resilient dynamic web applications. By storing and reusing data and rendered content, database, object, and full-page caching reduce server load, shorten response times, and improve the user experience. Each method targets a different layer of the application stack, and combining them, together with a deliberately chosen invalidation strategy, yields the largest gains. Understanding these techniques, and the trade-off between speed and freshness that each one introduces, is a foundational skill for delivering fast, efficient, and robust web applications.